Mousetracker Data #3


In this post, I'm extracting some additional information about the stimuli so that we can run further analysis on participants' choices. For further background, please refer to the first post in this series.

import os

import re

import pandas as pd

data = pd.read_csv('./data cleaned/%s' % os.listdir('./data cleaned')[0])

data.head()

includelist = pd.read_csv('n=452 subjectID.csv', header = None)

includelist = includelist[0].values

data = data.loc[data['subject'].isin(includelist)]

Overview

The basic idea is that participants were shown one of two sets of poker chips that they would split between themselves and another person close to them. In every case, they could make a choice that was either selfish (gave more to themselves), or altruistic (gave more to their partner).

We wanted to know how much utility a selfish choice would have to offer a participant before they chose it. In other words: how much more would a selfish choice have to give me before I stop being altruistic?

data['RESPONSE1'] = [x for x in data['resp_1'].str.extract(r'..(\d*)_.(\d*)', expand = True).values]

data['RESPONSE2'] = [x for x in data['resp_2'].str.extract(r'..(\d*)_.(\d*)', expand = True).values]

The columns 'resp_1' and 'resp_2' hold the file names of the images shown for each choice. The naming convention is 'S' followed by the number of chips the self gets, then 'O' followed by the number of chips the other person gets.
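To make the naming convention concrete, here is a minimal sketch that pulls the two chip counts out of a file name. The file names and the `parse_chips` helper are hypothetical, and the pattern is deliberately simpler than the one used in the cell above, so treat it as an illustration of the idea rather than the exact parsing used on the real data.

```python
import re

# Assumption: names look like "S<self chips>_O<other chips>"; the real files may differ.
CHIP_PATTERN = re.compile(r"S(\d+)_O(\d+)")


def parse_chips(filename):
    """Return (self_chips, other_chips) as ints, or None if the name does not match."""
    match = CHIP_PATTERN.search(filename)
    if match is None:
        return None
    return int(match.group(1)), int(match.group(2))


print(parse_chips("S9_O7.jpg"))     # (9, 7)
print(parse_chips("S8_O12.jpg"))    # (8, 12)
print(parse_chips("fixation.jpg"))  # None
```

Returning `None` for names that do not match keeps bad rows visible instead of silently turning them into zeros.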

We also have two other columns of interest: 'response' and 'error'. 'response' records which option the participant chose, and 'error' records whether that choice was selfish or altruistic: '0' is a selfish choice and '1' is an altruistic choice. This will become important shortly.

data.head()

Algorithm

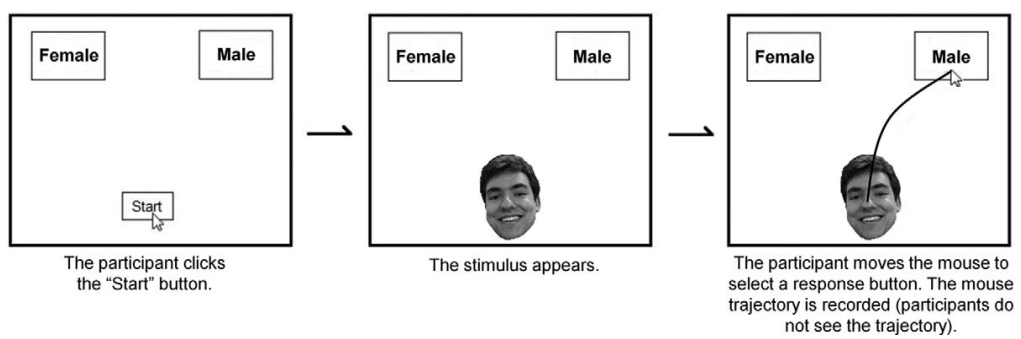

This got a little more complicated because the software we were using to capture mouse telemetry data randomized the position of the stimuli (i.e., whether the selfish choice was on the left or right of the screen was randomized), as it should.

This information is not a feature/variable on its own, but can be inferred from the 'response' and 'error' variables. If a participant chose the option on the left (response == 1), and that was coded as an 'error', it means the selfish choice was on the right (because 'errors' are altruistic choices).
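The inference can be written as a small truth table. This is only a sketch: `selfish_side` is a hypothetical helper, and it assumes that response == 2 means the option on the right, which is how the loop further down treats it.

```python
def selfish_side(response, error):
    """Infer where the selfish option was shown.

    response: 1 = chose left, 2 = chose right (the latter is an assumption)
    error:    0 = chose the selfish option, 1 = chose the altruistic option
    Returns "left", "right", or None for any other coding.
    """
    if response not in (1, 2) or error not in (0, 1):
        return None
    chose_left = response == 1
    chose_selfish = error == 0
    # The selfish option sits on the chosen side when the choice was selfish,
    # and on the opposite side when the choice was altruistic.
    return "left" if chose_left == chose_selfish else "right"


for response in (1, 2):
    for error in (0, 1):
        print(response, error, selfish_side(response, error))
```

The four combinations collapse into two cases, which is why the loop below only needs an if and an elif.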

There were two pieces of information that we wanted to extract:

- How many more chips did the selfish choice give the participant vs. the altruistic choice?

- How many more chips did the selfish choice give the group vs. the altruistic choice?

For example, let's look at the first row of data:

data.head(1)

In this case, our participant chose the left option, which was the selfish choice. Compared with the altruistic option, this choice gave the participant 1 more chip (9 - 8) and gave the group 4 fewer chips ((9 + 7) - (8 + 12) = -4).
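The same arithmetic as a tiny function, using the numbers from this first row. `selfish_advantage` is a hypothetical name used only for this illustration; each option is written as a (self chips, other chips) pair.

```python
def selfish_advantage(selfish, altruistic):
    """Compare two options given as (self_chips, other_chips) pairs.

    Returns (extra chips for the self, extra chips for the group)
    that the selfish option gives relative to the altruistic one.
    """
    self_more = selfish[0] - altruistic[0]
    group_more = sum(selfish) - sum(altruistic)
    return self_more, group_more


# First row: selfish option S9/O7, altruistic option S8/O12.
print(selfish_advantage((9, 7), (8, 12)))  # (1, -4)
```

A negative second value means the selfish option leaves the pair with fewer chips overall.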

I didn't have time to think of an efficient way to do this for all the rows at once, so I decided to brute force it. First, I created a smaller dataframe:

tempdata = pd.DataFrame(columns = ('RESPONSE','ERROR','RESPONSE1','RESPONSE2'))

tempdata['RESPONSE'] = data['response']

tempdata['ERROR'] = data['error']

tempdata['RESPONSE1'] = data['RESPONSE1']

tempdata['RESPONSE2'] = data['RESPONSE2']

tempdata['SELFISHCHOICESELFMORE'] = 0

tempdata['SELFISHCHOICEGROUPMORE'] = 0

This algorithm iterates through each row in the data frame, checks whether the selfish choice is on the left or the right, and does the math I described above.

SELFISHCHOICESELFMORE = []

SELFISHCHOICEGROUPMORE = []

# Walk through every trial and work out which option was the selfish one.
for _, row in tempdata.iterrows():
    resp, err = row['RESPONSE'], row['ERROR']
    opt1, opt2 = row['RESPONSE1'], row['RESPONSE2']  # each holds [self chips, other chips]
    if ((resp == 1) and (err == 0)) or ((resp == 2) and (err == 1)):
        selfish, altruistic = opt1, opt2  # option 1 was the selfish choice
    elif ((resp == 2) and (err == 0)) or ((resp == 1) and (err == 1)):
        selfish, altruistic = opt2, opt1  # option 2 was the selfish choice
    else:
        selfish = altruistic = None  # unexpected coding; still append so the lists match the rows
    try:
        self_more = int(selfish[0]) - int(altruistic[0])
        group_more = (int(selfish[0]) + int(selfish[1])) - (int(altruistic[0]) + int(altruistic[1]))
    except (TypeError, ValueError):
        # Chip counts could not be parsed (e.g. the file name did not match the pattern).
        self_more = group_more = None
    SELFISHCHOICESELFMORE.append(self_more)
    SELFISHCHOICEGROUPMORE.append(group_more)

tempdata = tempdata.drop(['RESPONSE1','RESPONSE2', 'RESPONSE', 'ERROR'], axis = 1)

tempdata['SELFISHCHOICESELFMORE'] = SELFISHCHOICESELFMORE

tempdata['SELFISHCHOICEGROUPMORE'] = SELFISHCHOICEGROUPMORE
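For comparison, the same calculation can probably be done for all rows at once. The sketch below runs on a toy data frame with made-up values, and it assumes the chip counts have already been split into four numeric columns (`self_1`, `other_1`, `self_2`, `other_2`), which are hypothetical names that do not exist in the real data.

```python
import numpy as np
import pandas as pd

# Toy trials covering the four response/error combinations (values are made up).
toy = pd.DataFrame({
    "response": [1, 1, 2, 2],
    "error":    [0, 1, 0, 1],
    "self_1":   [9, 8, 8, 9],
    "other_1":  [7, 12, 12, 7],
    "self_2":   [8, 9, 9, 8],
    "other_2":  [12, 7, 7, 12],
})

# Option 1 is the selfish one if it was chosen selfishly, or if option 2 was chosen altruistically.
option1_selfish = ((toy["response"] == 1) & (toy["error"] == 0)) | (
    (toy["response"] == 2) & (toy["error"] == 1)
)
option2_selfish = ((toy["response"] == 2) & (toy["error"] == 0)) | (
    (toy["response"] == 1) & (toy["error"] == 1)
)

# +1 keeps "option 1 minus option 2", -1 flips it; anything else becomes NaN.
sign = np.select([option1_selfish, option2_selfish], [1.0, -1.0], default=np.nan)

toy["SELFISHCHOICESELFMORE"] = sign * (toy["self_1"] - toy["self_2"])
toy["SELFISHCHOICEGROUPMORE"] = sign * (
    (toy["self_1"] + toy["other_1"]) - (toy["self_2"] + toy["other_2"])
)

print(toy[["SELFISHCHOICESELFMORE", "SELFISHCHOICEGROUPMORE"]])
```

Because every toy row pits the same selfish option (9 for self, 7 for other) against the same altruistic option (8 and 12), all four rows should come out as 1 and -4 regardless of which side the selfish option was on.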

Concatenating and writing to a csv:

outdata = pd.concat([data,tempdata], axis = 1)

outdata.to_csv('combineddata3.csv')